Author: gyonas

Author of Death Rays and Delusions, The Dragon’s CLAW, The Dragon’s Brain, and The Dragon’s Blood.

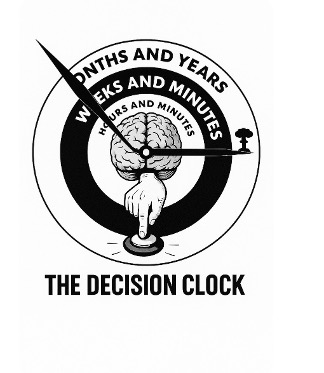

Solarium 2.0 and the Golden Dome: The Decision Clock is Ticking

In my previous post, I called for a modern version of Eisenhower’s Cold War Project Solarium, a disciplined, structured exercise that brought together multiple competing strategies to shape U.S. national security policy for decades. This updated version, Solarium 2.0, is not just a thought experiment; it’s a decision-making engine designed for the complexities of today’s world. Now, I’m proposing a specific application: using Solarium 2.0 to evaluate the Golden Dome missile defense concept, from its initial architecture and technology development to its deployment and real-world use in a crisis. The aim is to stress-test every stage of the Golden Dome under realistic, high-pressure scenarios, measuring not just technical feasibility, but also strategic stability, deterrence value, and global political impact. With the Decision Clock ticking, the question isn’t whether to act; it’s whether we can act wisely, together, before time runs out.

Can AI Help Us Choose a Better Path?

We live in a world haunted by wicked problems—problems that mutate, multiply, and resist every attempt at resolution. Climate collapse. Authoritarian drift. AI accelerating beyond oversight. Debt spirals. War without end. Each of these crises feeds on the others, creating a Gordian knot that neither our leaders nor our institutions seem able to untangle.

What can be done?

One idea, admittedly audacious, is to take a lesson from history. In 1953, President Eisenhower launched Project Solarium, a bold experiment in structured strategic debate. Three teams of experts—each representing a different philosophy toward Soviet containment—were asked to develop, argue, and defend their strategies. Eisenhower didn’t pick favorites; he wanted the sharpest disagreements and clearest thinking to shape America’s Cold War policy. The outcome helped solidify the doctrine of containment, which guided U.S. policy for decades.

Could we do something similar today—only smarter, faster, and more inclusive?

That’s the premise of a Solarium 2.0. Not a political stunt or top-down policy declaration, but a structured process to think our way through global dilemmas. Teams of diverse thinkers—equipped with AI-enhanced reasoning tools (software that helps analyze data, challenge assumptions, and model outcomes in real-time)—would be guided by an experimental new concept: a values-based AI coach. This coach, rather than dictating answers, prompts participants to consider ethical frames, recognize cognitive bias, and evaluate decisions through shared human values like justice, sustainability, and long-term resilience. Why a coach? Because even smart, ethical people face cognitive overload, fall into groupthink, or overlook long-term consequences—especially under stress. A values-based AI coach doesn’t replace human judgment; it enhances it by prompting reflection, highlighting ethical dimensions, and encouraging diverse viewpoints in real time.

It’s part war game (testing how different strategies play out under pressure), part design studio (developing novel solutions), and part constitutional convention (re-examining foundational assumptions and frameworks for action). The goal isn’t to draft legal documents but to explore better ways to think, act, and collaborate in a world of accelerating complexity.

But wait—who gets to play? Who chooses the questions? Why should anyone listen?

These are critical questions. And they lead to a bigger one: Should the new Solarium be national or global?

Solarium 2.0 is envisioned as an international initiative, drawing participants from multiple countries and cultural perspectives. Today’s decision-makers—men like Putin, Trump, Netanyahu—often play zero-sum games. But our survival may depend on win/win solutions. We may not have an Eisenhower today. But we can build the process he pioneered—updated for our era.

AI-assisted collective intelligence—humans working with AI systems to make better group decisions—isn’t science fiction. It’s a growing research field. But most systems today focus on narrow tasks or post-hoc analysis. What we need is a leap forward: real-time human-AI decision-making for wicked problems, with the AI acting not as oracle, but as orchestrator.

In a 2024 review of human-AI collaboration published in Intelligent-Based Systems, Hao Cui—a leading researcher in multi-agent systems—surveyed dozens of experiments. Most involved controlled environments and constrained tasks. But none attempted a live simulation of high-stakes, global-scale problem-solving under cognitive load. Why not? Possibly because of technical hurdles—or perhaps because we’ve lacked the will to convene the right people with the right tools.

That’s why I’m calling for a bold experiment: the Solarium 2.0 War Game.

Convene it under the auspices of a respected neutral body—such as the U.S. National Academies of Sciences, Engineering, and Medicine—and draw participants from national labs, forward-thinking companies, and leading universities. Equip them with emerging AI platforms designed not just for speed or scale, but for principled, values-aligned decision support. Use real-world scenarios, real time constraints, and real disagreement.

We’ve theorized enough. Now it’s time to find out:

Can we do better—together?

(Disclosure: This document represents a collaboration of human and artificial intelligence. The illustration was created by AI.)

Again with the hereafter

As I mentioned in an earlier blog post, now that I’m in my 80s, I frequently find myself dwelling on the hereafter. In other words, I find myself walking into the garage in the middle of an errand, presumably fetching something for my wife, and I ask myself, “What am I here after?” I’ve even tried asking AI, since most people are outsourcing tasks to AI nowadays. Alas, AI (like me) had not listened to my wife.

This leads me to consider AI’s shortcomings (a much better choice than dwelling on my own shortcomings!). But does AI have shortcomings? As artificial intelligence continues to accelerate in speed and scale, many assume that the future of problem-solving will be machine-dominated. But what if neither AI nor unaided human reasoning is sufficient to address the wicked problems of our age? Wicked problems have no closed-ended solutions. They defy simple answers and cut across traditional domains of expertise. They require not just faster computation but deeper wisdom, along with new ways of thinking.

Before turning to AI for assistance, let’s start by considering what we can do to make people think better. Is there a way to boost human intelligence and cognitive abilities? If you have read my latest novel, The Dragon’s Brain, you are familiar with an invention called the Brainaid, a form of non-invasive brain stimulation and brain wave entrainment that improves focus, creativity, memory, and emotional regulation. If you haven’t read The Dragon’s Brain, what are you waiting for? Head to your favorite bookstore or website and get your copy now. (Here’s the Amazon link.) In any case, the fictional Brainaid is based on a similar brain stimulation device that I patented many years ago. While a fully functional product is yet to hit the market, I firmly believe that science can–and will–enhance human thought. AI is getting smarter, so why not enhance the human side of the equation too?

The current narrative often positions AI as a replacement for human intelligence. But the reality is more nuanced. Machines excel at speed, scale, and pattern recognition. Humans contribute meaning, ethics, empathy, and insight. Each is powerful on its own—but neither is complete.

AI alone can be dangerous. It lacks human intuition and empathy. Perhaps you’ve met a physicist, engineer, or computer scientist who also lacks human intuition and empathy. I earned my bachelor’s degree in engineering physics from Cornell University, where I was trained to think precisely, quantitatively, and rigorously. But two courses outside the engineering curriculum had an even greater long-term impact on how I think. The first was a course in the Philosophy of Science taught by Max Black, a sharp and elegant thinker who challenged us to ask not just how science works, but what we mean when we claim to know something. The second was a course in the Philosophy of Religion taught by Milton Konvitz, who opened my mind to the moral foundations of law, liberty, and human dignity—drawing from both secular and religious traditions. These classes taught me to ask hard questions, tackle wicked problems, and never separate the technically possible from the ethically responsible.

That’s why I propose a purposeful collaboration between humans and AI. There is no need to hand our cognitive duties over to machines; rather, we need to enhance our abilities and learn to use artificial intelligence to help us tackle the wicked problems we’ve been unable to solve.

Imagine a decision system composed of diverse humans enhanced for clarity, openness, and ethical discernment, using AI to optimize data processing, information retrieval, and scenario modeling. Imagine humans working in partnership with AI to develop a problem-solving approach that supports feedback, deliberation, and adaptation with accountability, transparency, and value alignment. This is not a hive mind. It is more like a meta-cortex: a layered neural system in which individual insight is preserved, but multiplied through structured collaboration and augmented intelligence.

If we succeed, we may find ourselves entering a new age: not of superintelligence, but of super-collective wisdom. We may experience:

- A future where leadership is not about who dominates, but about who understands,

- A future where advancing age, like mine (95 in a decade), is not a barrier but an asset,

- A future where the tools of science and technology serve a higher purpose: to help us decide wisely, together.

So, as I stand in the garage asking myself, “What am I here after?” Maybe just that.